How to use Talend in Hadoop?

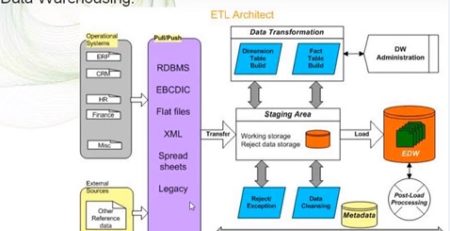

Talend is an ETL tool used to extract, transform, and load business data into a data warehouse, where it can then be accessed. This article outlines how to use Talend for data integration with Hadoop and thereby create a data store that supports further analysis.

To set up Talend ETL on Hadoop, follow the steps below.

- Set up Hadoop Cluster

The cluster that will hold your data can run in the cloud or on in-house storage, depending on the type of data you want to analyze. Set up the Hadoop cluster to match your needs. Also check whether your data can later be moved to the cloud and analyzed there, and verify whether you can use test data for development.

- Connect the data sources

The Hadoop ecosystem uses numerous open-source technologies. These complement one another, increase its data-handling capacity, and let you connect different data sources. Before connecting a source, check the type of data it holds, how much data there is, and the rate at which new data is generated. Integrating business intelligence tools can then yield richer business insights.
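The three checks above (type, amount, and rate of new data) can be recorded in a small source inventory. The following is a minimal sketch in plain Python, not a Talend or Hadoop API; the `DataSource` class, the source names, and all figures are hypothetical and serve only to show how a rough storage projection might be computed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSource:
    """Hypothetical description of one source feeding the cluster."""
    name: str
    kind: str              # e.g. "relational", "log files"
    current_gb: float      # amount of data held today
    daily_growth_gb: float  # rate at which new data arrives

    def projected_gb(self, days: int) -> float:
        """Linear estimate of the source's size after `days` days."""
        return self.current_gb + self.daily_growth_gb * days


# Illustrative values only; replace with measurements from your own systems.
SOURCES = [
    DataSource("orders_db", "relational", current_gb=500.0, daily_growth_gb=2.0),
    DataSource("web_logs", "log files", current_gb=50.0, daily_growth_gb=0.5),
]


def total_projected_gb(sources, days):
    """Sum the projected size of every source after `days` days."""
    return sum(source.projected_gb(days) for source in sources)


total_after_30_days = total_projected_gb(SOURCES, 30)
```

A linear projection is a deliberate simplification; sources with bursty or seasonal growth would need a different estimate.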

- Define the metadata

Hadoop can store data, but how you use it is up to you. Define the precise semantics and structure of the data you plan to analyze. Making this explicit helps you transform the data to fit your needs based on the metadata, and it removes ambiguity about what each field looks like and how it is generated.
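As an illustration of what defining the structure up front can look like, here is a minimal sketch in plain Python. It is not how Talend stores metadata; the `FieldSpec` class, the `ORDER_SCHEMA` fields, and the `validate` helper are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """Hypothetical description of one field: its name, type, and nullability."""
    name: str
    dtype: type
    nullable: bool = False


# Assumed schema for an illustrative "orders" data set.
ORDER_SCHEMA = [
    FieldSpec("order_id", int),
    FieldSpec("customer", str),
    FieldSpec("amount", float),
    FieldSpec("coupon", str, nullable=True),
]


def validate(record, schema):
    """Return a list of problems; an empty list means the record conforms."""
    problems = []
    for field in schema:
        value = record.get(field.name)
        if value is None:
            if not field.nullable:
                problems.append(f"{field.name}: missing required value")
        elif not isinstance(value, field.dtype):
            problems.append(
                f"{field.name}: expected {field.dtype.__name__}, "
                f"got {type(value).__name__}"
            )
    return problems
```

With a schema like this written down, a record such as `{"order_id": "1", "customer": "a", "amount": 2.5}` is flagged because `order_id` arrives as a string rather than an integer.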

- Create ETL jobs

Now focus on extracting, transforming, and loading the data from the various sources. Which technology to use depends on the data set and the transformations required. Decide whether each data integration job should run as a batch job or a streaming job.
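The extract, transform, and load stages can be sketched as three small functions. This is a plain Python illustration of a batch job under assumed inputs, not code produced by Talend; the sample CSV, the field names, and the in-memory sink are hypothetical.

```python
import csv
import io
import json

# Hypothetical raw input; a real job would read from a file or database.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,bob,not-a-number
3,carol,7.25
"""


def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim names, cast types, and skip rows that fail to parse."""
    clean = []
    for row in rows:
        try:
            clean.append({
                "order_id": int(row["order_id"]),
                "customer": row["customer"].strip(),
                "amount": float(row["amount"]),
            })
        except ValueError:
            # A production job would usually route rejects to an error output.
            continue
    return clean


def load(rows, sink):
    """Load: write one JSON object per line to a file-like sink."""
    for row in rows:
        sink.write(json.dumps(row) + "\n")
    return len(rows)


sink = io.StringIO()  # stands in for the target storage
loaded = load(transform(extract(RAW_CSV)), sink)
```

Here the whole input is processed in one pass, which is the batch style. A streaming job would instead apply the same transform to records as they arrive.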

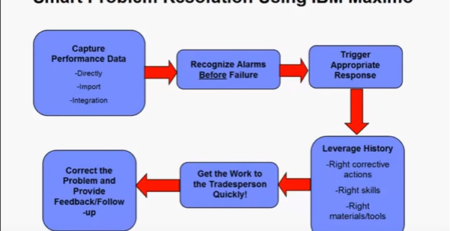

- Generate the workflow

Create the desired workflow by combining multiple ETL jobs. Once the workflow has run, the data store is populated and the data is available for further analysis.
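Chaining several jobs into one workflow can be sketched as an ordered list of steps that stops at the first failure. This is a minimal plain Python illustration, not a Talend feature; the `run_workflow` helper, the job names, and the in-memory `warehouse` dictionary are hypothetical.

```python
def run_workflow(jobs):
    """Run (name, callable) pairs in order and stop at the first failure.

    Returns the names of the completed jobs and an error message, or None.
    """
    completed = []
    for name, job in jobs:
        try:
            job()
        except Exception as exc:
            return completed, f"{name} failed: {exc}"
        completed.append(name)
    return completed, None


# In-memory stand-in for the data store the workflow fills.
warehouse = {}


def load_customers():
    warehouse["customers"] = ["alice", "bob"]


def load_orders():
    warehouse["orders"] = [("alice", 10.5), ("bob", 7.25)]


def build_summary():
    # Depends on load_orders having run first.
    totals = {}
    for customer, amount in warehouse["orders"]:
        totals[customer] = totals.get(customer, 0.0) + amount
    warehouse["summary"] = totals


completed, error = run_workflow([
    ("load_customers", load_customers),
    ("load_orders", load_orders),
    ("build_summary", build_summary),
])
```

Ordering the jobs explicitly makes the dependency visible: `build_summary` only produces a result because the load steps ran before it.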